What to Do When a Self-Driving Car Fails in an Emergency

Every article on this site is researched by our internal team, reviewed for legal accuracy against current Texas law, and held to State Bar of Texas advertising standards before publication. We do not publish content that overstates outcomes or makes promises about results.

Learn more about our editorial standards.

Key Takeaways

- Texas law defines "operator" differently when an automated driving system is engaged, which affects who may be liable after a crash.

- Automated vehicles must attempt a "minimal risk condition" when they can't continue normal operation, and whether they do so appropriately can determine fault.

- Vehicle logs, sensor data, and remote assistance records provide critical evidence that may disappear without prompt preservation efforts.

You were driving home on Research Boulevard when a Waymo ahead of you stopped in the middle lane. Emergency lights flashed in your rearview mirror. The ambulance couldn’t get through. You swerved to avoid the stopped vehicle and collided with another car.

Now you’re dealing with a sore neck, vehicle damage, and questions about who’s responsible when a self-driving car fails to respond like a human driver would.

Automated vehicles are becoming more common on Texas roads. When they work correctly, they follow traffic laws and yield to emergency vehicles. When they don’t, the consequences can be serious.

Understanding how these systems are supposed to behave, why they sometimes fail, and what Texas law requires can help you pursue a claim if you’re involved in a crash after a self-driving car acts erratically in an emergency.

How Self-Driving Systems Are Supposed to Handle Emergencies

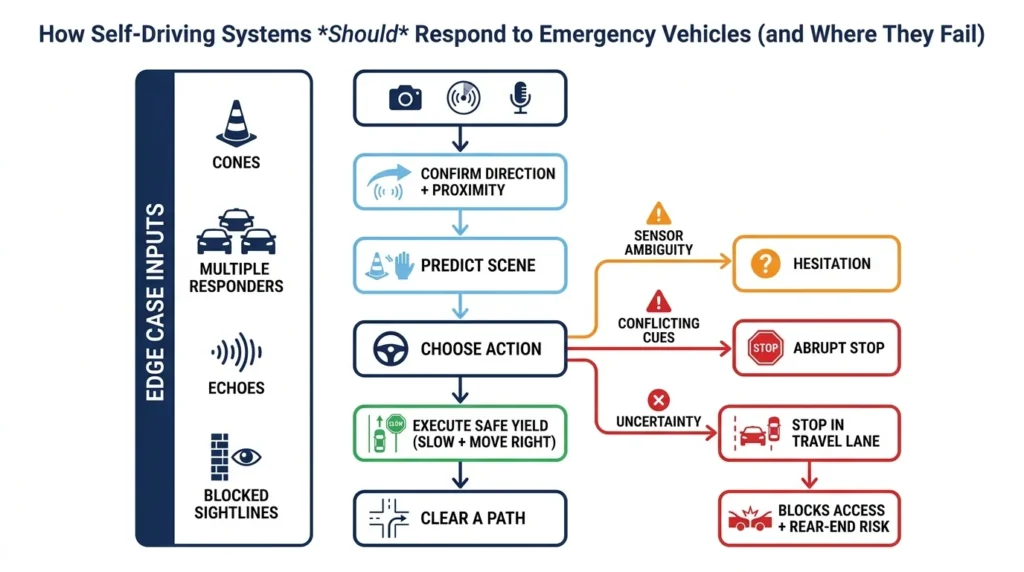

Automated driving systems are designed to detect emergency vehicles through cameras, radar, and sometimes microphones. When the system recognizes flashing lights or sirens, it should yield safely by slowing down, moving to the right, and clearing a path.

The reality is more complicated. Emergency scenes combine unpredictable human behavior, temporary traffic patterns, and split-second decisions. These “edge cases” push automated systems to their limits.

Common challenges include:

- Multiple emergency vehicles approaching from different directions

- Officers directing traffic contrary to signal lights

- Temporary cones and flare patterns that conflict with lane markings

- Sirens echoing off buildings in urban areas like Downtown Austin or the Galleria area in Houston

- Blocked sightlines from trucks or other large vehicles

When sensors can’t clearly identify what’s happening, the system may hesitate, stop unexpectedly, or fail to yield appropriately. A vehicle that stops in a travel lane instead of pulling to the shoulder can block emergency access and create rear-end collision risks for following drivers.

Key Terms That Affect Liability

Before discussing fault, you need to understand how Texas law distinguishes between different types of automated systems.

Automated Driving System (ADS) refers to technology capable of performing the entire driving task without human supervision. These correspond to SAE “Level 4” and “Level 5” automation; the robotaxis operating in cities like Austin and Houston are generally described as Level 4.

Advanced Driver Assistance Systems (ADAS) include features like adaptive cruise control, lane-keeping assist, and automatic emergency braking. These systems require a human driver to remain attentive and ready to take control. Most vehicles with “autopilot” or “self-driving” marketing actually use ADAS, not full ADS.

Under Texas Transportation Code Chapter 545, the definition of “operator” can shift when an ADS is engaged. This affects who receives traffic citations and who may be liable for civil damages. The person behind the wheel isn’t always the one Texas law holds responsible.

Texas’s Automated Vehicle Framework

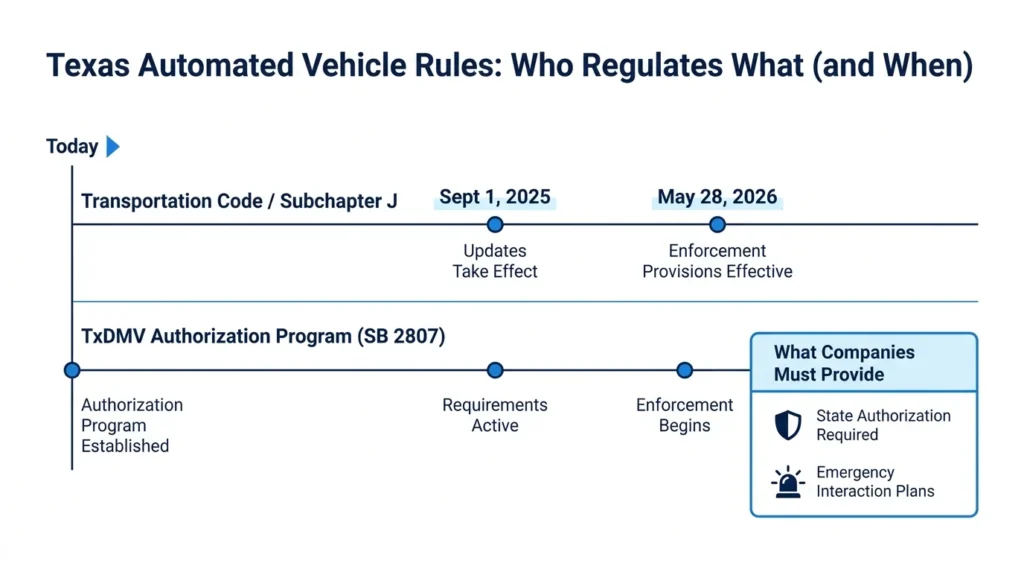

Texas regulates automated vehicles through two main channels: Transportation Code requirements and the TxDMV authorization program established by SB 2807.

The TxDMV Autonomous Vehicles Regulatory Program requires companies operating automated vehicles to obtain state authorization. This includes submitting emergency interaction plans that explain how their vehicles will respond to first responders, emergency scenes, and law enforcement.

Subchapter J of the Transportation Code, with updates effective September 1, 2025, establishes requirements for automated motor vehicles operating on Texas roads. The TxDMV program includes enforcement provisions that take effect May 28, 2026.

What “Minimal Risk Condition” Means

When an automated vehicle can’t continue normal operation, it should achieve what Texas law calls a minimal risk condition. This means reaching a safer state, such as slowing down and moving out of active travel lanes.

The concept sounds simple. The execution often isn’t. A vehicle that stops on a curve, blocks an intersection, or parks in a travel lane has instead become a new hazard.

Whether a vehicle attempted an appropriate minimal-risk response becomes a key question after crashes. Did the system recognize it needed to stop? Did it choose a safe location? Was the company’s programming reasonable for foreseeable emergency scenarios?

Recording Devices & Available Evidence

Texas law includes requirements for recording devices in automated vehicles. These systems may capture:

- Whether the ADS was engaged at the time of the crash

- Sensor data showing what the vehicle “saw”

- Takeover requests or warnings issued to any safety driver

- Remote assistance communications

- Speed, steering, and braking inputs

Federal requirements also apply. NHTSA’s Standing General Order requires manufacturers to report certain ADS and ADAS crashes. This framework means companies may have already collected and preserved data about incidents involving their vehicles.

This evidence can prove what happened in ways that witness statements and police reports cannot. It can also disappear quickly if not preserved through proper legal channels.

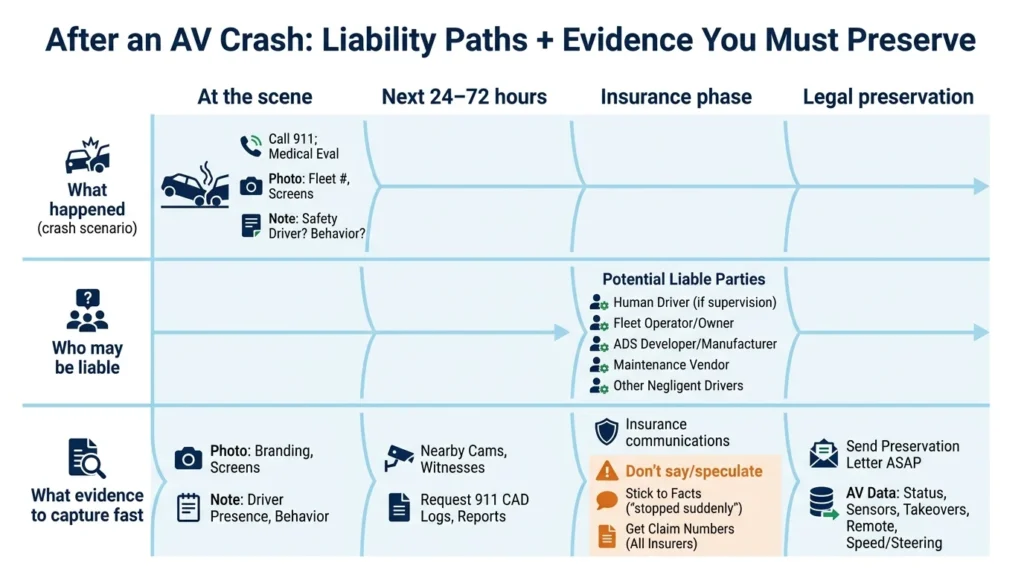

Who Can Be Liable After a Self-Driving Car Crash

Liability in automated vehicle crashes depends on multiple factors. Potential responsible parties include:

- The human driver (if one was present and required to supervise)

- The vehicle owner or fleet operator

- The ADS developer or manufacturer

- Maintenance vendors responsible for sensor calibration or software updates

- Other negligent drivers involved in the crash

Key questions investigators and insurers will examine:

- Was the ADS engaged at the moment of impact?

- Did the system issue a takeover request the driver ignored?

- Did the vehicle attempt an appropriate minimal risk condition?

- Was the vehicle authorized to operate under Texas’s program?

- Did the company’s emergency interaction plan address this scenario?

When a robotaxi blocks an ambulance or fails to yield to a fire truck on MoPac in Austin, the resulting delays can cause additional harm. Secondary crashes, delayed medical treatment, and chain-reaction collisions create complex causation questions that require careful documentation.

Product Liability vs. Negligence Claims

Two main legal theories apply to automated vehicle crashes.

Negligence claims focus on unreasonable conduct: unsafe operation, failure to monitor when required, inadequate fleet management, or insufficient emergency procedures.

Product liability claims focus on the technology itself: design defects that made the system unreasonably dangerous, inadequate warnings about limitations, or failure to address foreseeable emergency scenarios.

Both theories may apply in the same case. A company might be negligent in how it deployed vehicles while also having designed a system with defects. Preserving vehicle logs, sensor data, and internal communications often matters more in these cases than in typical car accidents involving only human drivers.

What to Do After an Automated Vehicle Crash

Protecting your claim requires immediate action and attention to AV-specific evidence.

At the scene:

- Call 911 and accept medical evaluation

- Document the vehicle’s branding, fleet number, and any visible company logos

- Note whether a safety driver was present

- Take photos of dashboard indicators or screens

- Screenshot any rideshare app showing your trip

- Record the vehicle’s behavior after the crash (did it stay stopped, try to drive away, or display any messages?)

Preserve third-party evidence:

- Identify nearby businesses with security cameras

- Get contact information from witnesses who saw the vehicle’s behavior

- Request the 911 CAD logs and first responder incident reports

- Ask responding officers to note any unusual vehicle behavior in their report

Insurance communications:

Describe facts without speculation. Saying “the car stopped suddenly” is accurate. Saying “the self-driving system malfunctioned” is a conclusion you can’t verify at the scene. Request claim numbers from every involved insurer early, including the fleet operator’s commercial policy.

Throughout, collect the same basic information you would after any crash, then add the AV-specific details listed above.

When to Contact an Attorney

Serious injuries, disputed fault, commercial robotaxi involvement, or concerns about disappearing data all warrant prompt legal consultation. Vehicle logs can be overwritten. Remote assistance recordings may have retention limits. Companies may claim proprietary protections over their data.

A preservation letter sent quickly can prevent evidence destruction. Waiting weeks or months may mean critical information no longer exists.

At Angel Reyes & Associates, we’ve spent over 30 years helping Texans injured in complex crashes. We understand that automated vehicle cases require technical investigation beyond standard accident reconstruction. Our team works with experts who can interpret sensor data, engagement logs, and system behavior.

We offer free consultations and handle cases on contingency, meaning no fee unless we win. With more than 20 locations across Texas and the ability to handle most of your case remotely, we’re accessible wherever you are.

If you’ve been injured in a crash involving a self-driving or semi-autonomous vehicle, contact us to discuss your situation. The evidence that proves what happened may not last forever.

Self-Driving Cars & Emergencies FAQs

Can a self-driving car be ticketed for failing to move over in Texas?

Texas law can treat the operator of an automated driving system differently from the person sitting in the vehicle, so a citation may go to someone other than the occupant. A citation also may not tell you who is civilly responsible for the crash, because traffic enforcement and injury liability often follow different rules.

What if a self-driving car causes a crash without actually hitting anyone?

You may still have a claim if the vehicle’s actions set off a chain-reaction wreck, such as forcing another driver to swerve or brake suddenly. In those cases, timing, video, witness statements, and dispatch records can be especially important.

Are black box records the same as self-driving car data?

Not necessarily. A standard event data recorder may capture speed and braking, while an automated vehicle may also store system-status logs, sensor information, camera footage, and remote-support records.

Can weather affect how a self-driving car responds in an emergency?

Yes. Heavy rain, fog, glare, dust, or dirty sensors can reduce what the system detects and may affect how safely it responds to sirens, lane shifts, or sudden obstacles.

Do Texas self-driving car rules apply only to fully driverless vehicles?

No. Some Texas rules focus on automated motor vehicles, but crashes involving partially automated features can still raise similar fault and evidence questions. The key issue is often how much control the human driver still had and what the system was designed to do.