Who Is the “Driver” in a Texas Self-Driving Crash?

Every article on this site is researched by our internal team, reviewed for legal accuracy against current Texas law, and held to State Bar of Texas advertising standards before publication. We do not publish content that overstates outcomes or makes promises about results.

Learn more about our editorial standards.

Key Takeaways

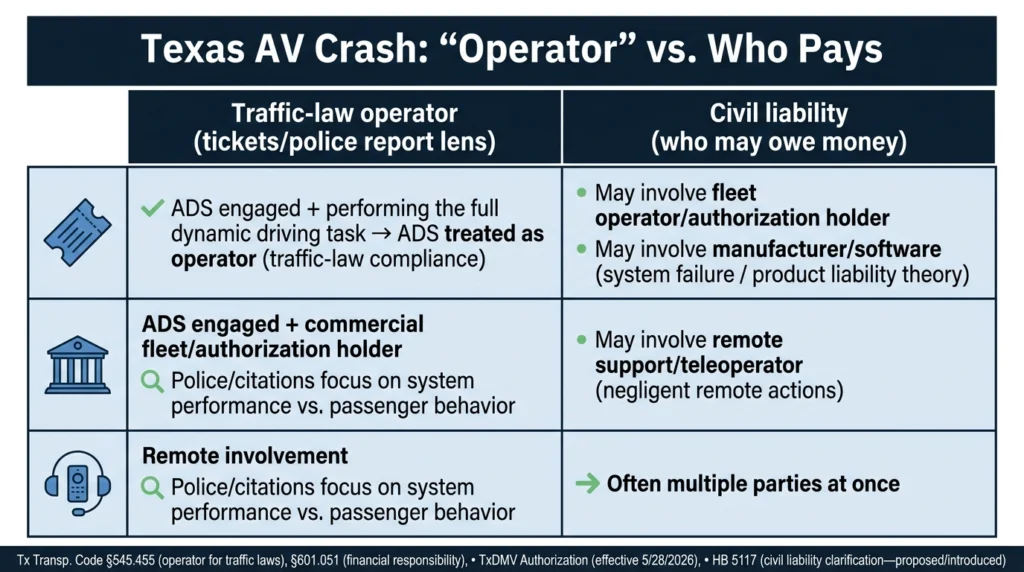

- Texas law treats the Automated Driving System or the authorization holder as the “operator,” rather than any human occupant, when the ADS is engaged.

- Remote assistance and teleoperation are different, and proving which occurred requires preserving session logs, timestamps, and operator records quickly.

- Multiple parties may be liable after an AV crash, including the fleet operator, remote support vendors, and the vehicle or software manufacturer.

Multiple Parties Could Be Liable for Damages

You were rear-ended at a red light in Lower Greenville last week. The car that hit you had no one behind the wheel. Now you’re dealing with injuries, vehicle damage, and a company representative who keeps talking about “the system” instead of answering your questions about who was actually responsible.

Autonomous vehicle crashes in Dallas-Fort Worth are hard to sort out unless you know who the law treats as responsible and which evidence to preserve.

Why You Need to Determine Who the Operator Was

Texas autonomous vehicle cases often turn on one practical question: who had legal responsibility for operating the vehicle when the Automated Driving System was engaged? This matters whether you were hit by a robotaxi in Deep Ellum, an autonomous delivery vehicle in Plano, or a self-driving truck on I-35E.

The answer affects everything. It determines who gets blamed in the police report, who receives traffic citations, whose insurance pays, and who you can sue for compensation in a personal injury claim.

In traditional car accidents, identifying the at-fault driver is usually straightforward. AV crashes add layers of complexity because “the driver” might be a software system, a remote support operator hundreds of miles away, or a company that authorized the vehicle to operate.

Texas’s Statutory Framework: Who the Law Treats as the “Operator”

Texas Transportation Code Chapter 545, Subchapter J (beginning at § 545.451), provides the rules for vehicles in which an Automated Driving System (ADS) is engaged. However, significant legislative updates in 2025 and 2026 have added layers of accountability that every accident victim must understand.

The ADS as the “Traffic Law Operator”

Under Texas Transportation Code § 545.455, when an ADS is engaged and performing the entire dynamic driving task, the system itself is considered the operator for the purpose of assessing compliance with traffic laws. This means if a robotaxi runs a red light, the law looks at the software’s performance, not the passenger’s actions.

The Mandatory “Authorization Holder” (New for 2026)

As of May 28, 2026, any company operating autonomous vehicles for commercial purposes in Texas, such as robotaxis or delivery fleets, must hold a formal Automated Motor Vehicle Authorization from the Texas Department of Motor Vehicles (TxDMV).

To maintain this authorization, companies must certify that their vehicles:

- Are capable of achieving a “minimal risk condition” (pulling over safely) if the system fails.

- Are equipped with a recording device (black box) to capture crash data.

- Comply with all federal safety standards and state insurance requirements.

The Law Enforcement Interaction Plan

Texas law also requires these companies to file a specific Interaction Plan with the Department of Public Safety (DPS). This plan dictates how the vehicle should communicate with police, firefighters, and EMS at a crash scene.

If you were hit by an AV in Dallas, your attorney should immediately verify whether the company’s actual conduct at the scene matched its filed Interaction Plan.

Operator Status vs. Civil Liability (HB 5117)

While the Transportation Code identifies the “operator” for tickets, House Bill 5117, introduced in the 89th Texas Legislature, aims to further clarify civil collision liability. The bill seeks to explicitly designate the ADS manufacturer, rather than the fleet owner or human occupant, as the responsible party for damages when a collision is caused by a failure of the automated system.

Because these laws are evolving rapidly, “who was driving” is no longer just a factual question – it’s a high-stakes legal determination involving state permits, manufacturer software logs, and specific insurance layers.

Remote Assistance vs. Teleoperation

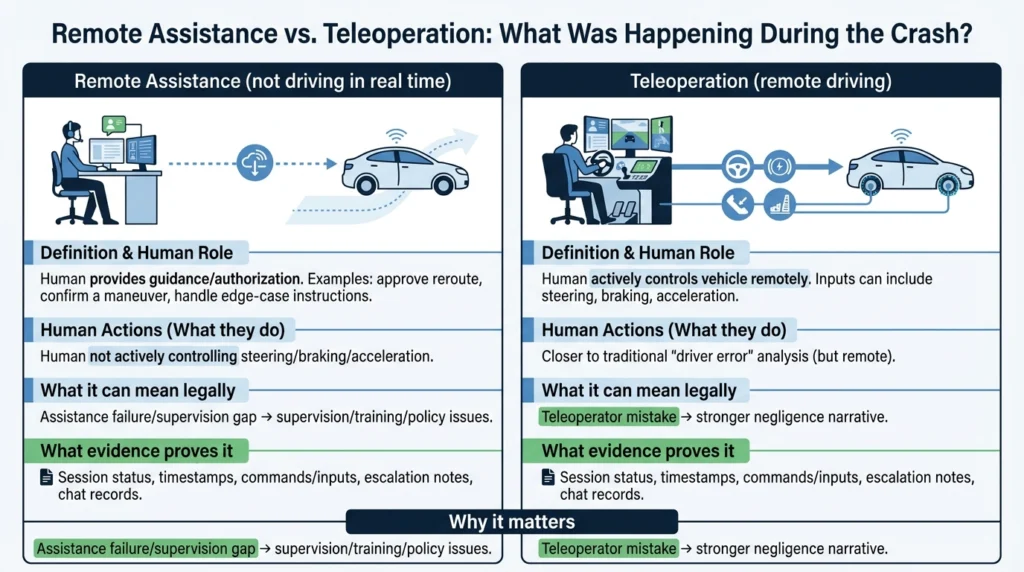

Many autonomous vehicle programs use remote support, but that doesn’t mean someone was “driving” in real time. Understanding the difference can change your entire fault analysis.

Remote assistance involves a human providing guidance or authorization to the vehicle. They might approve a route change or help the system navigate an unusual situation, but they’re not controlling steering, braking, or acceleration in real time.

Teleoperation means a human is actively controlling the vehicle remotely, essentially driving it from a distance.

The common misconception that “there’s always a remote driver steering it” isn’t necessarily true. A Senate investigation into autonomous vehicle companies revealed serious safety gaps and a lack of transparency in remote assistance operator practices. This matters because if you assume remote control existed when it didn’t, you might miss the actual source of the failure.

Types of Evidence That Can Prove Remote Involvement

Remote involvement evidence can support or undercut different legal theories depending on what happened. If a teleoperator made a bad decision, that supports a negligence claim against the company. If remote assistance failed to intervene when it should have, that might support a supervision claim.

If remote support was active during your crash, specific records can prove it.

These include:

- Remote assistance session logs with timestamps.

- Teleoperator inputs and commands.

- Internal tickets and escalation notes.

- Chat logs between the vehicle system and support staff.

- Supervisor approvals for route changes or maneuvers.

Send a preservation letter immediately. Data may be overwritten on short retention schedules. Some companies only keep detailed logs for days or weeks. Waiting too long can mean critical evidence disappears permanently.

Liability in a DFW AV Crash: Who You May Be Able to Sue

Most autonomous vehicle cases involve multiple potentially responsible parties.

Your “party map” might include:

- Other human drivers who were involved in the crash.

- The fleet operator or authorization holder.

- Remote support vendors.

- The vehicle owner (if different from the operator).

- Maintenance contractors.

- The vehicle manufacturer and software companies.

Two main legal paths exist. Negligence-based claims focus on how the vehicle was operated, maintained, or supervised. Product liability claims focus on defects in the vehicle’s design, manufacturing, or warnings.

Even if the ADS was “driving,” companies can still face civil exposure for supervision failures, deployment decisions, maintenance lapses, training deficiencies, mapping errors, and remote-support practices.

When You Can Sue the Manufacturer

You can potentially sue the manufacturer or software company if evidence supports a product-liability theory.

Theories that may support a claim against the manufacturer include:

- Alleged perception or planning errors by the ADS.

- Sensor failures that caused the system to miss obstacles.

- Known defect patterns the company failed to address.

- Inadequate warnings or instructions to users.

- Unsafe software updates.

Proof often requires versioning data, update history, disengagement logs, prior incident reports, and internal investigations. Multiple vendors may share responsibility, and expert review is usually required to interpret technical evidence.

When the Fleet Operator Is Liable

For robotaxi or autonomous truck operations, the fleet operator or authorization holder is often the most direct compensation path.

You may have a claim against the fleet operator based on:

- Deploying vehicles in unsafe areas or conditions.

- Inadequate remote support staffing.

- Poor training or supervision of safety drivers.

- Maintenance lapses affecting sensors or systems.

- Negligent dispatch decisions.

Insurance Implications After an AV Crash

AV cases can involve layered insurance coverage. Texas Transportation Code § 601.051 requires financial responsibility for vehicles operated on Texas roads, and § 601.072 sets the minimum liability coverage amounts.

You may have multiple claim paths available. A third-party liability claim goes against the at-fault party’s insurance. First-party claims through your own PIP, MedPay, or UM/UIM coverage may also apply.

Common friction points include insurers blaming “the technology,” delays while data is reviewed, and disputes over who the operator was. Preserve evidence while claims are pending because insurers may use data gaps against you.

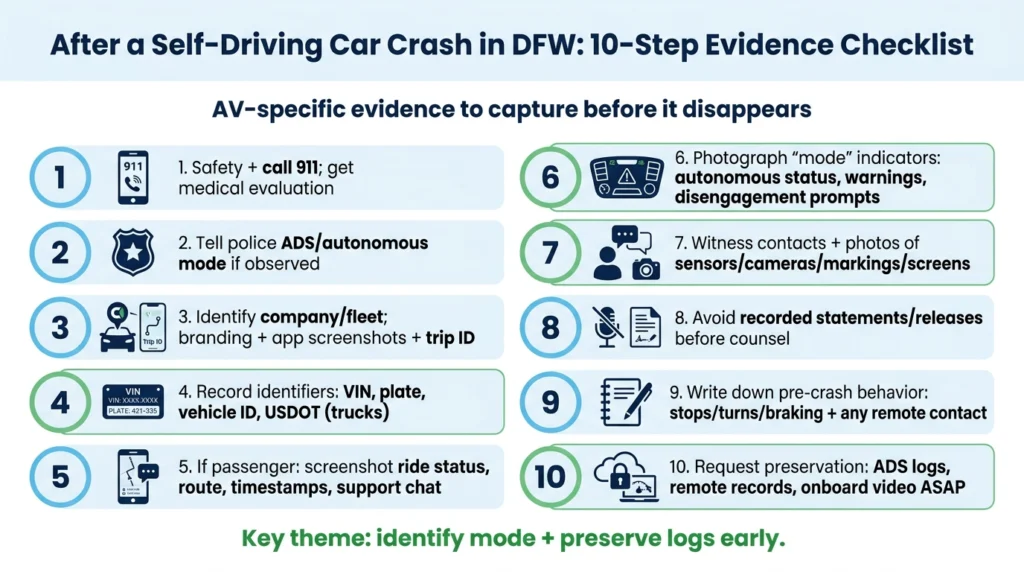

What to Do After an Accident With a Self-Driving Car in DFW

AV crashes add technical evidence challenges and multi-party liability questions that can overwhelm standard accident claims. Early strategy matters.

If you were involved in a self-driving car accident, take these steps immediately:

- Prioritize safety and call 911. Get a medical evaluation even if injuries seem minor.

- Tell police you believe the vehicle was operating in autonomous mode if that’s what you observed.

- Identify the company or fleet. Note the branding on the vehicle and, if you were a passenger, get the trip ID.

- Gather identifiers, including VIN, license plate, company vehicle ID, and USDOT numbers for autonomous trucks.

- Capture app details if you were a robotaxi passenger: screenshots of ride status, route, pickup and drop-off points, timestamps, and any support chat or incident prompts.

- Document “mode” indicators, including any display showing autonomous mode, warnings, disengagement prompts, or “pull over” messages.

- Get witness contacts and photographs of sensors, cameras, vehicle markings, and any interior screen displays.

- Avoid recorded statements or signing releases before consulting an attorney.

- Write down what the vehicle did before the crash: stops, turns, braking behavior, and whether anyone contacted you remotely.

- Request preservation of ADS logs, remote assistance records, and onboard video as quickly as possible.

Talk to a Lawyer Who Understands AV Cases

Legal assistance from an experienced firm can help protect your claim. Your attorney can send preservation letters, coordinate with reconstruction experts, identify all responsible entities, interpret Texas operator status rules, and handle insurers who want to blame “the system” instead of paying fair compensation.

Angel Reyes & Associates has over 30 years of experience and has recovered more than $1 billion for clients. We offer free initial consultations, and you pay no fees unless we win. Contact us to discuss your autonomous vehicle crash and learn what evidence you need to protect your claim.

Self-Driving Vehicle Operator FAQs

Does Texas law require a self-driving car company to carry insurance?

Yes. Under Texas law, any vehicle operating with an Automated Driving System (ADS) engaged must be covered by motor vehicle liability insurance or a pre-approved self-insurance plan. However, for commercial operations like robotaxis or autonomous delivery fleets, the rules are significantly more involved than a standard personal policy.

Can a passenger in a robotaxi be held responsible for a crash?

Usually, a paying passenger is not treated like the person driving just because they were inside the vehicle. But a passenger could still become part of the liability picture if they interfered with the vehicle, blocked safety equipment, or created a hazard.

What if the self-driving vehicle company says it needs time to review data before answering questions?

That is common in AV crashes, but delays can make it harder to confirm what mode the vehicle was in and whether remote support was involved. Early requests to preserve logs, video, trip records, and communication records can matter because some data may not be kept for long.

Are autonomous vehicle crash reports public in Texas?

Basic crash report information may be available through normal Texas crash-report channels, but detailed AV data like onboard logs, remote-operation records, and internal incident reviews usually are not handed over at the scene. That information often has to be requested directly or obtained later through the claims process or litigation.

Does a federal crash report mean the self-driving company was at fault?

No. Federal reporting can help show that an ADS-equipped vehicle was involved in a reportable event, but it does not decide legal fault or who must pay damages.