Tesla vs. Waymo Robotaxi Safety: Cameras vs. Lidar

Every article on this site is researched by our internal team, reviewed for legal accuracy against current Texas law, and held to State Bar of Texas advertising standards before publication. We do not publish content that overstates outcomes or makes promises about results.

Learn more about our editorial standards.

Key Takeaways

- Sensor design choices (camera-only vs. sensor fusion) can affect detect-and-avoid performance at intersections and in the adverse weather and low-light conditions common across Texas.

- Texas's 51% proportionate responsibility rule makes fault allocation critical, and "operator" arguments in automated vehicle cases add complexity.

- Preserving digital evidence quickly is essential because vehicle logs, camera footage, and sensor data are controlled by the robotaxi company and can be overwritten.

You were driving through an intersection in Montrose when a robotaxi ran a red light and struck your vehicle. The company says its cameras didn’t detect you in time. Now, you’re dealing with injuries, a totaled car, and an insurance process that feels nothing like a normal fender-bender.

Self-driving technology is rolling out across Texas cities. How these vehicles “see” the road can shape what happens at the moment of impact, what evidence exists afterward, and how fault gets argued under Texas law.

Why Sensor Choices Are Relevant After a Self-Driving Crash

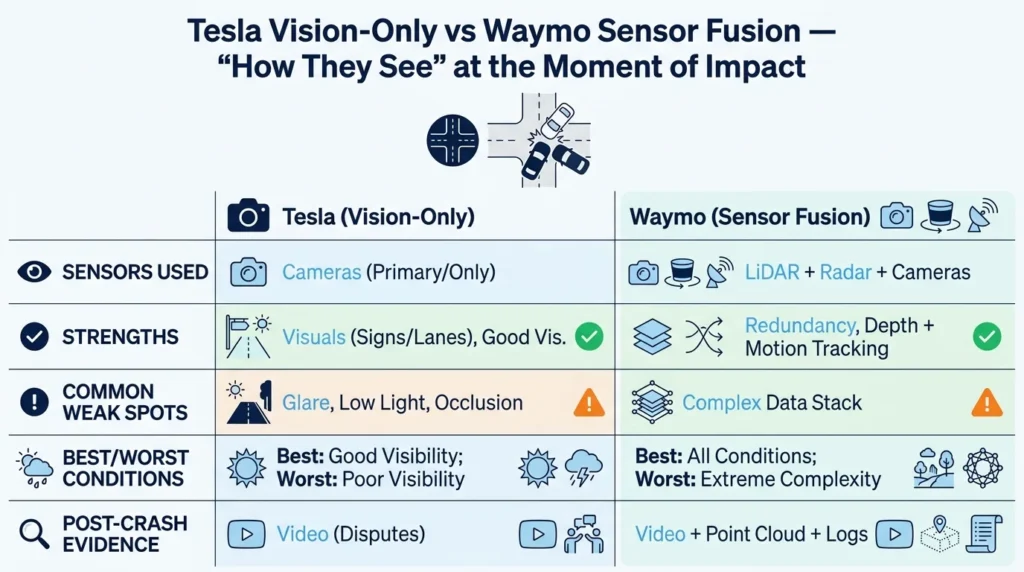

Two main approaches dominate autonomous vehicle design today. Tesla uses a camera-centric “vision-only” system. Waymo uses sensor fusion that combines LiDAR, radar, and cameras.

This isn’t just a tech debate. These design choices can influence detect-and-avoid performance at intersections, how well the system handles Houston rain or Austin glare, and what digital evidence exists after a collision.

For crash victims, the sensing approach can change the entire claims process. It affects what data you can request, how fault gets allocated under Texas’s 51% rule, and which insurance policies apply when a robotaxi operates like a rideshare platform.

Sensor Stacks 101: Camera-Only vs Sensor Fusion

Let’s discuss the difference between camera-only systems and systems that rely on sensor fusion.

Cameras capture color, texture, lane markings, and signage. They work similarly to human eyes. Unfortunately, that means they can also struggle with glare, low contrast, darkness, and situations in which depth is hard to judge from a flat image.

Sensor fusion layers multiple sensor types with overlapping coverage. According to Waymo’s safety report, its vehicles use LiDAR for 3D depth mapping, radar for velocity detection, and cameras for visual detail. The idea is that when one sensor type is impaired, another can compensate.

LiDAR measures distance by bouncing laser pulses off objects. Because it supplies its own light, it can create a 3D map of the environment regardless of ambient lighting.
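To make the distance measurement concrete: for a time-of-flight LiDAR, each pulse travels to the object and back, so the distance is the speed of light multiplied by the round-trip time, divided by two. The Python sketch below assumes an idealized single return with no processing delay; the function name is our own illustration, not any manufacturer's software.

```python
# Speed of light in a vacuum, in meters per second (exact by SI definition).
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def lidar_distance_m(round_trip_seconds: float) -> float:
    """Hypothetical helper: distance to a target from an idealized pulse's round trip.

    The pulse travels out to the object and back, so the one-way
    distance is half of (speed of light x elapsed time).
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2


# A reflection that returns after 200 nanoseconds puts the object about 30 m away.
print(round(lidar_distance_m(200e-9), 2))  # → 29.98
```

Because the timing depends on the laser's own pulse rather than sunlight, darkness alone does not change this calculation, which is why LiDAR is often paired with cameras for night driving.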

Radar detects motion and velocity. It’s commonly described as more robust than cameras in rain, fog, and low visibility. Waymo’s blog on weather conditions explains how radar and LiDAR provide redundancy when camera visibility drops.

The tradeoff is most obvious in challenging conditions. A camera-only system relies entirely on visual interpretation, while a sensor-fusion system has backup pathways if one sensor fails or degrades.

Intersections & Occlusions: Where Sensor Tradeoffs Take Center Stage

Texas urban driving conditions create repeatable high-risk scenarios: left turns across traffic on Westheimer Road, pedestrians stepping out from between parked cars in the Heights, and cyclists riding alongside tall trucks on Loop 610.

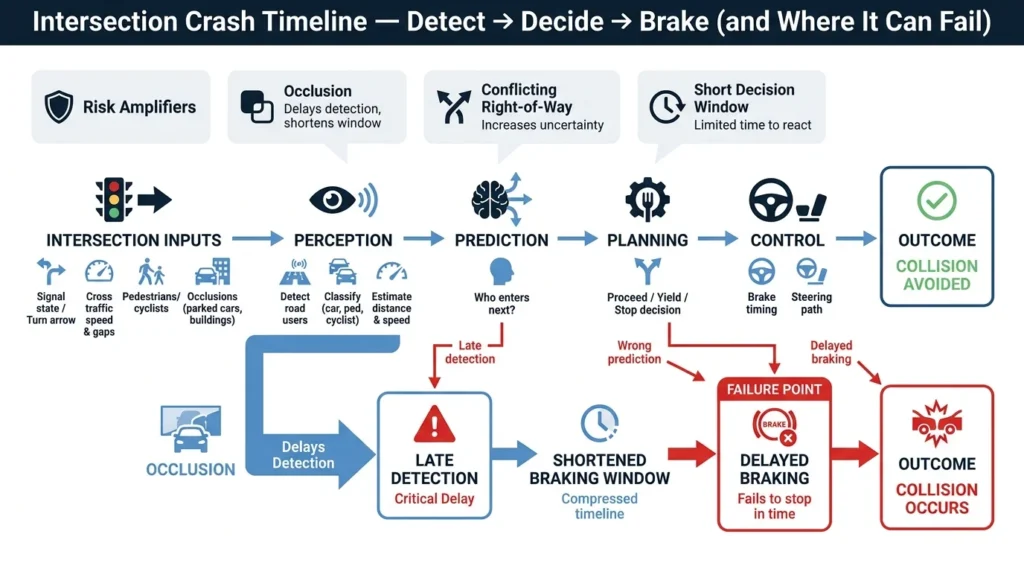

Intersections are challenging because multiple road users converge with conflicting right-of-way, short decision windows, and frequently blocked sightlines.

Left Turns & Crossing Traffic

Unprotected left turns are among the most dangerous maneuvers because the vehicle must judge gaps in oncoming traffic, often with limited visibility.

When a crash happens at an occluded intersection, the key questions are what the system detected, when it detected it, and whether there was time to brake.

Key information includes signal phase, turn arrow status, speed, braking behavior, and any sightline obstructions. Knowing what information to collect immediately after the crash will help strengthen your position.

Pedestrians, Cyclists, & Motorcycles

Vulnerable road users present unique detection challenges. Examples include a pedestrian stepping out from between parked cars, a cyclist riding alongside a delivery truck, or a motorcycle obscured in multi-lane traffic on I-45.

Sensor redundancy arguments often center on whether one modality’s weakness could have been covered by another. For example, if a camera couldn’t see a pedestrian in shadow, could radar have detected their movement?

Witness statements and nearby business cameras can be decisive when vehicle data is unavailable. Taking immediate steps to document the scene will help protect this evidence.

Texas Rain, Glare, & Low Light: How Road Conditions Affect Perception

Texas weather can be brutal on visibility: sudden downpours on Beltway 8, blinding sun glare at low angles during rush hour, and nighttime driving with high contrast between headlights and darkness.

Remember, cameras rely on usable illumination and contrast. When a sudden storm hits or the sun sits directly in the camera’s field of view, visual perception can degrade significantly.

What to Document When Weather Is a Factor

- Photos of sky conditions, road sheen, and pooled water

- Windshield wiper and headlight status at the time of impact

- Visibility of lane markings

- 911 timestamps and weather history records

- Your location relative to the sun’s position

These details can become central to fault arguments. A statement such as “the sun was blinding” can apply to human drivers and camera-centric systems alike.

Evidence in Robotaxi Crashes: What Data Exists

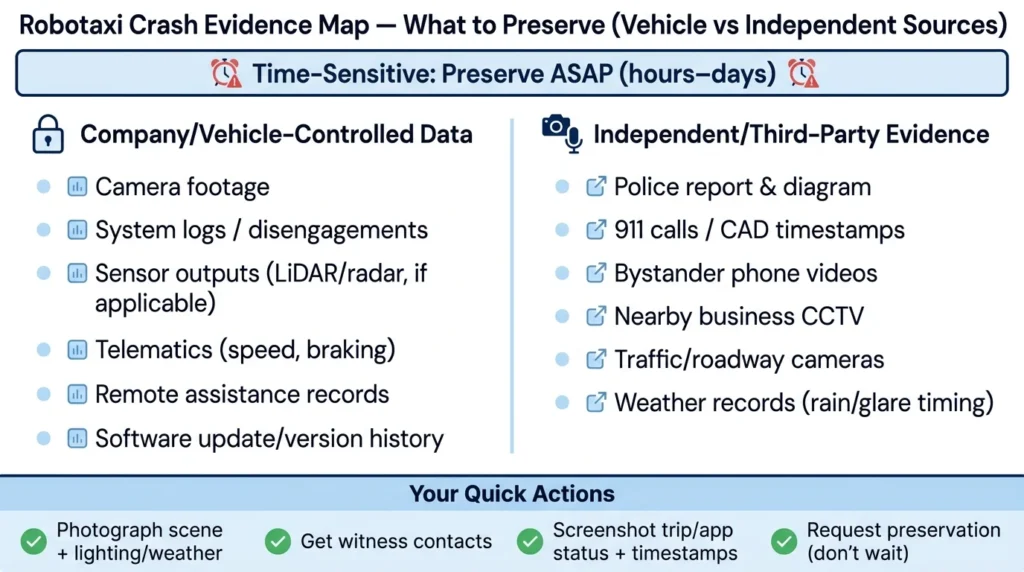

Robotaxi crash claims can hinge on digital evidence that disappears fast.

Vehicle-controlled evidence may include camera footage, system logs, sensor outputs, telematics, remote assistance records, and information about software updates around the incident date.

The challenge: Most critical data is controlled by the company. Preservation letters and fast legal action may be needed to prevent data from being deleted or overwritten.

Non-vehicle sources include police crash reports, 911 audio recordings, bystander videos, nearby business camera footage, and roadway camera footage.

Why Sensor Choices Change the Story

Camera-heavy cases often revolve around video interpretation. Was the pedestrian visible? Did sun glare obscure the signal? Could a human have seen what the camera missed?

Multi-sensor cases may include additional data layers, like LiDAR point clouds or radar tracks. These can add corroboration or complexity, depending on what the data shows.

The core questions are:

- Was there time to detect?

- Was there time to brake?

- Should the system have responded sooner?
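One common way to frame these timing questions is basic kinematics: the distance a vehicle covers between detecting a hazard and starting to brake, plus the distance it needs to stop under constant deceleration (v²/2a). The Python sketch below is a simplified illustration. The function names, the 0.5-second detection-to-brake delay, and the 7 m/s² deceleration are hypothetical values chosen for the example, not figures from any robotaxi's logs or any reconstruction standard.

```python
def stopping_distance_m(speed_mps: float, reaction_s: float, decel_mps2: float) -> float:
    """Hypothetical helper: total distance needed to stop.

    Assumes constant speed until the brakes engage (reaction_s seconds),
    then constant deceleration (decel_mps2) until the vehicle stops.
    """
    if decel_mps2 <= 0:
        raise ValueError("deceleration must be positive")
    reaction_distance = speed_mps * reaction_s           # distance covered before braking
    braking_distance = speed_mps ** 2 / (2 * decel_mps2)  # v^2 / (2a)
    return reaction_distance + braking_distance


def could_stop_in_time(distance_to_hazard_m: float, speed_mps: float,
                       reaction_s: float, decel_mps2: float) -> bool:
    """True if the vehicle could stop before reaching the hazard."""
    return stopping_distance_m(speed_mps, reaction_s, decel_mps2) <= distance_to_hazard_m


# Assumed scenario: 15 m/s (about 34 mph), 0.5 s delay, 7 m/s^2 hard braking.
speed = 15.0
print(round(stopping_distance_m(speed, 0.5, 7.0), 1))  # → 23.6
print(could_stop_in_time(20.0, speed, 0.5, 7.0))        # → False
print(could_stop_in_time(30.0, speed, 0.5, 7.0))        # → True
```

Under these assumptions, a hazard first detected 20 meters ahead could not be avoided by braking alone, while the same hazard detected 30 meters ahead could. That gap is why the moment of detection often matters as much as the braking itself.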

Fault in Texas: The 51% Rule & “Operator” Arguments

Texas uses proportionate responsibility for car accident claims. Under Texas Civil Practice and Remedies Code Chapter 33, your recovery is reduced by your percentage of fault. If you’re found more than 50% responsible, you recover nothing.

This makes fault allocation critical in every self-driving crash.
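A simplified illustration of the arithmetic: a claimant's damages are reduced by their percentage of responsibility, and recovery is barred entirely above 50%. The Python function below is hypothetical and deliberately ignores settlement credits, multiple defendants, damage caps, and the other adjustments a real claim can involve.

```python
def texas_recovery(damages: float, claimant_fault_pct: float) -> float:
    """Hypothetical helper: simplified proportionate-responsibility math.

    - More than 50% responsible: recovery is barred (returns 0).
    - Otherwise: damages are reduced by the claimant's percentage.
    Ignores settlement credits, caps, and multi-party allocation.
    """
    if not 0 <= claimant_fault_pct <= 100:
        raise ValueError("fault percentage must be between 0 and 100")
    if claimant_fault_pct > 50:
        return 0.0
    return damages * (1 - claimant_fault_pct / 100)


# Same $100,000 in damages at different fault percentages.
for pct in (0, 20, 50, 51):
    print(f"{pct}% at fault -> ${texas_recovery(100_000, pct):,.0f}")
```

With $100,000 in damages, this prints $100,000, $80,000, $50,000, and $0. The jump from $50,000 at 50% to nothing at 51% shows why a single percentage point of assigned fault can decide the outcome.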

Who Is the “Operator”?

Texas Transportation Code Chapter 545 contains provisions that may treat the owner of an engaged automated driving system as the “operator” for purposes of traffic-law compliance. This affects who gets cited and how responsibility arguments develop.

Potential responsible parties can include the vehicle owner, the platform or operating entity, a safety driver (if present), other motorists, and product-related defendants (if applicable). Each case depends on specific facts.

How Comparative Fault Is Argued

Defense themes often include statements such as: “You should have seen the robotaxi.” “You were speeding.” “You entered the intersection unsafely.”

Plaintiff themes focus on right-of-way evidence, the system’s decision timeline, and whether the vehicle’s behavior was reasonable, given what its sensors could detect.

Early evidence preservation and consistent medical documentation can help reduce the impact of blame-shifting tactics.

Insurance Layering After a Robotaxi Collision

Robotaxi operations can raise the same insurance problems as rideshare crashes. Coverage may depend on trip status, vehicle ownership, and which policies overlap.

Texas Insurance Code Chapter 1954 addresses insurance requirements for transportation network companies. Even when a robotaxi model differs from traditional rideshare, this framework suggests the right questions to ask.

Steps to Avoid Coverage Delays

To avoid coverage delays, do the following:

- Confirm all involved policies and entities (such as the platform, the vehicle owner, and any commercial coverage).

- Document the trip or app status with screenshots and timestamps.

- Avoid making recorded statements until you understand the involved parties and fault theories.

Don’t assume this is “just like a normal car accident.” In robotaxi cases, multiple insurers may point fingers at one another while your bills pile up.

What to Do Immediately After a Self-Driving Collision

Your safety and medical care come first. Call 911. Accept a medical evaluation even if your injuries feel minor, and follow up quickly because some symptoms can be delayed.

Collect evidence at the scene, including:

- Photos and video footage of all vehicles, damage, and road conditions

- Witness contact information

- Vehicle identifiers and any visible platform branding

- Screenshots of any ride receipts or app information

Start an automation fact log. Note what the vehicle did (for example, whether it braked late, hesitated, or accelerated unexpectedly). Record any alerts shown on in-vehicle screens and anything the driver or occupants said. Don’t speculate; just document.

Ask responding officers to include key identifiers in the report, such as the vehicle owner, platform name, and any statements about whether automation was engaged.

Consider a prompt legal consultation so preservation demands can go out before data logs and video footage are overwritten.

When to Talk to a Texas Crash Attorney

These cases can involve fast-moving evidence, multiple corporate entities, and high-stakes fault arguments. Signs that you may need immediate legal help include:

- Serious injuries

- Disputed right-of-way

- Unclear “operator” story

- Multiple insurers involved

- Rapid corporate outreach after the crash

At Angel Reyes & Associates, we’ve spent over 30 years helping Texas families navigate complex injury claims. We’ve recovered more than $1 billion for our clients. We offer free consultations and work on contingency, meaning you pay no fee unless we win.

Our team can help you preserve critical digital evidence, coordinate with reconstruction experts, track your damages, and negotiate with layered insurers. We have 16 locations across Texas and can handle most of your case remotely.

Contact us today to discuss your situation. You can also review our case results and client testimonials to learn how we’ve helped others.

Tesla & Waymo Safety FAQs

Does a police report have to say the car was in self-driving mode for a claim to be taken seriously?

No. A claim can still be valid even if the report is unclear about automation, but getting proof may be harder if app records, vehicle identifiers, or company statements were not preserved early.

Can black-box data help after a robotaxi or autonomous-vehicle crash in Texas?

Sometimes. Many vehicles store event data (such as speed, braking, and seatbelt use), but whether that data is available, and whether it captures automation-related details, depends on the vehicle and who controls access.

What if the self-driving vehicle was updated shortly before the crash?

A recent software update can become relevant if it changed braking, detection, or driver-assist behavior, which is why update history is often something investigators try to preserve. It does not automatically prove fault, but it may help explain why the vehicle responded the way it did.

Can a city or road contractor share blame in a Texas self-driving crash?

In some cases, yes. Poor signal timing, missing lane markings, malfunctioning traffic lights, or unsafe road design can contribute to a crash, although claims against government entities usually involve special rules and shorter notice requirements.

If I was a passenger in the robotaxi, do I still need to use my own insurance?

Possibly. Depending on the facts and available coverage, your own PIP, MedPay, or health insurance may help with early bills while liability is being sorted out between the company and other insurers.