Are Self-Driving Cars Safer Than Human Drivers? What Texas Crash Data Says

Every article on this site is researched by our internal team, reviewed for legal accuracy against current Texas law, and held to State Bar of Texas advertising standards before publication. We do not publish content that overstates outcomes or makes promises about results.

Learn more about our editorial standards.

Key Takeaways

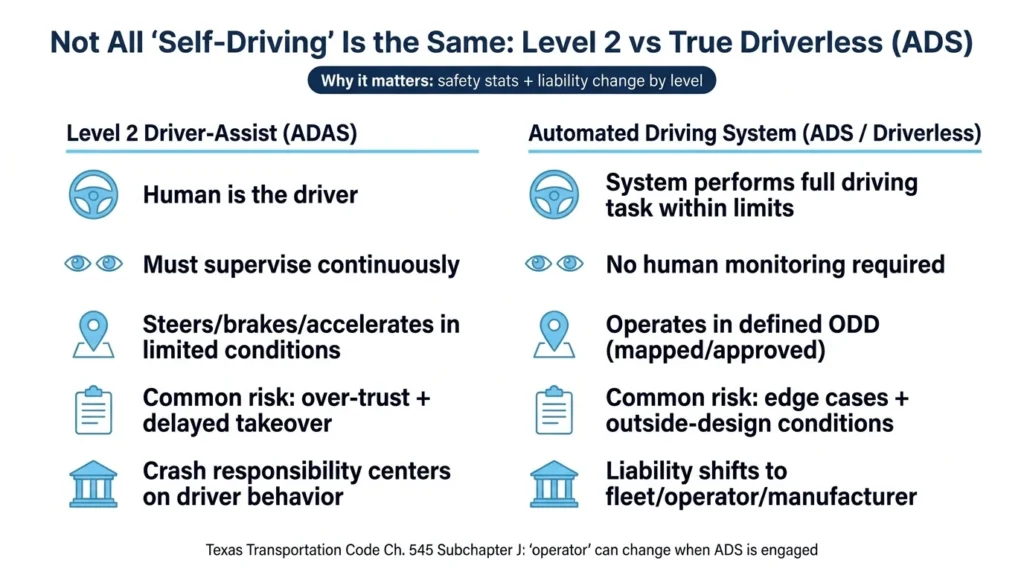

- True driverless systems and Level 2 driver-assist require different safety analyses and create different liability scenarios under Texas law.

- Per-mile crash rates and insurance claims frequency provide more meaningful comparisons than raw crash counts, which lack exposure context.

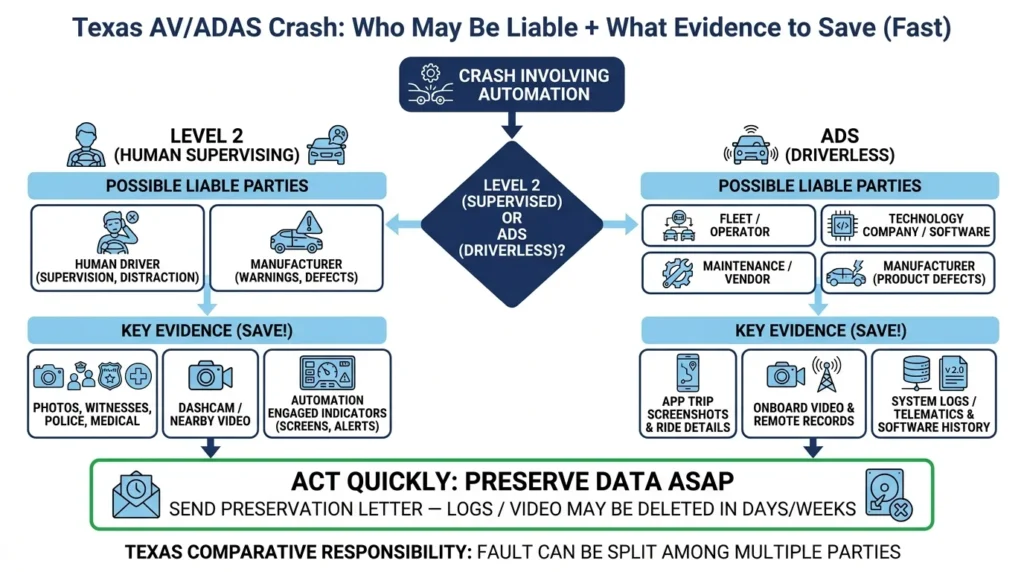

- Texas crashes involving automated vehicles may involve multiple liable parties, and critical evidence like system logs can disappear quickly without prompt preservation efforts.

You were rear-ended while stopped at a traffic light in Lower Greenville last week. The other driver insists their car’s “autopilot” was engaged. Now you’re dealing with injuries, vehicle damage, and an insurance adjuster who keeps asking questions you’re not sure how to answer.

You’ve seen headlines claiming self-driving cars are safer than humans. From your experience, it seems like the opposite is true. Neither helps you understand what happened or who should pay for your medical bills.

The truth is more complicated than any headline suggests. Whether automated vehicles crash more or less than human drivers depends entirely on what you’re measuring, which systems you’re comparing, and how you define “self-driving” in the first place. That being said, we can definitively say a few things:

- Driver-assist systems that require human supervision remain prone to crashes caused by human operator error.

- Crash metrics for fully autonomous vehicles are difficult to compare because crashes vary widely in severity.

- Fully autonomous vehicles tend to see dramatically increased accident numbers when placed in conditions they are not optimized to handle.

“Do Self-Driving Cars Crash More?” Is Harder to Answer Than It Sounds

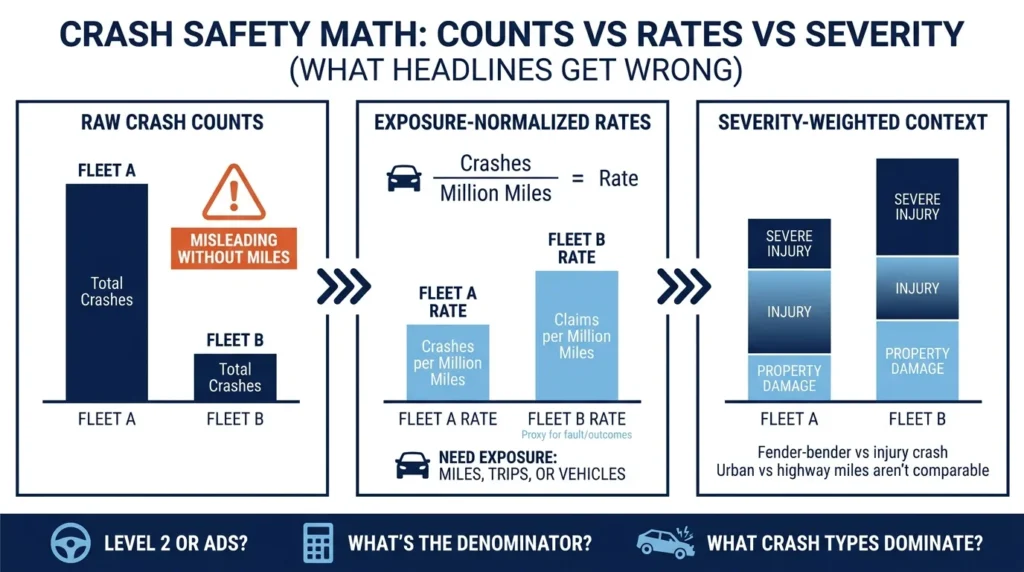

Most crash statistics you’ll find online mix two very different things:

- They often lump together true driverless vehicles with driver-assist systems that still require human supervision

- They compare raw crash counts instead of crash rates per mile driven

A system that drives 25 million miles will almost certainly log more total crashes than one that drives 100,000 miles. That doesn’t mean it’s less safe; it means it drove more. The meaningful question is how often crashes happen relative to exposure.
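To make the exposure point concrete, here is a minimal Python sketch. The mileage totals match the example above; the crash counts are hypothetical numbers chosen only for illustration and do not come from any study or fleet.

```python
def crashes_per_million_miles(crashes: int, miles: float) -> float:
    """Normalize a raw crash count to one million miles of exposure."""
    if miles <= 0:
        raise ValueError("miles must be positive")
    return crashes * 1_000_000 / miles


# Hypothetical crash counts, for illustration only.
large_fleet = crashes_per_million_miles(crashes=50, miles=25_000_000)
small_fleet = crashes_per_million_miles(crashes=1, miles=100_000)

print(f"Large fleet: {large_fleet:.1f} crashes per million miles")
print(f"Small fleet: {small_fleet:.1f} crashes per million miles")
```

In this made-up scenario the larger fleet has 50 times as many crashes but one-fifth the crash rate, which is why a raw count alone says little about safety.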

For those injured in crashes involving any level of vehicle automation, understanding these distinctions is vital. How automated a vehicle is and the nature of that automation affect how liability gets assigned, which parties may be responsible, and what evidence you’ll need to support your claim.

Level 2 Driver Assist vs True Driverless Operation

The term “self-driving” gets applied to systems that work very differently. Level 2 driver assist (like Tesla’s Autopilot or GM’s Super Cruise) can steer, accelerate, and brake under certain conditions. However, the human behind the wheel remains the driver who must supervise continuously and intervene when needed.

True automated driving systems operate differently. When engaged within their designed limits, the ADS performs the entire driving task. No human needs to monitor the road.

Texas law recognizes this distinction. Texas Transportation Code Chapter 545, Subchapter J defines “automated motor vehicle” and establishes who counts as the “operator” when an ADS is engaged. The operator designation affects police reports, insurance claims, and potential litigation.

When a Level 2 system is involved in a crash, the human driver typically bears responsibility for supervising the system. When a true driverless robotaxi crashes, the analysis shifts toward the fleet operator, the technology company, and potentially maintenance vendors. Real cases often involve multiple parties even when the public imagines a single “driver.”

Measuring Crash Risk: Counts vs Rates vs Claims

Raw crash counts tell you almost nothing useful about safety. A fleet that operates 24 hours a day across a major metro area will accumulate crashes simply because it accumulates miles.

Exposure-normalized metrics provide better comparisons:

- Crashes per million miles driven accounts for how much each system actually operates

- Insurance claims per million miles uses claims as a proxy for crashes where someone was at fault

- Severity-weighted rates account for whether crashes caused injuries or just minor damage

Even per-mile statistics can mislead if the miles aren’t comparable. Urban miles in stop-and-go traffic create different crash opportunities than highway miles at steady speeds. A robotaxi operating exclusively in dense neighborhoods faces different risks than a human driver commuting from Plano to downtown Dallas on US-75.
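A short Python sketch can show how the mix of miles skews a per-mile comparison. Every figure below is hypothetical and invented for illustration; the fleet names, road-type split, and crash counts are assumptions, not data from any real operator or study.

```python
# Hypothetical (crashes, miles) by road type; not real data.
human = {"urban": (60, 10_000_000), "highway": (40, 40_000_000)}
robotaxi = {"urban": (20, 5_000_000)}  # assumed to drive urban miles only


def rate(crashes: int, miles: int) -> float:
    """Crashes per million miles."""
    return crashes * 1_000_000 / miles


def overall_rate(fleet: dict) -> float:
    """Pool all road types into one per-million-mile rate."""
    total_crashes = sum(c for c, _ in fleet.values())
    total_miles = sum(m for _, m in fleet.values())
    return rate(total_crashes, total_miles)


human_overall = overall_rate(human)
robotaxi_overall = overall_rate(robotaxi)
human_urban = rate(*human["urban"])
robotaxi_urban = rate(*robotaxi["urban"])

print(f"Overall: human {human_overall:.1f} vs robotaxi {robotaxi_overall:.1f}")
print(f"Urban only: human {human_urban:.1f} vs robotaxi {robotaxi_urban:.1f}")
```

With these invented numbers, the pooled rate makes the robotaxi look worse, while the urban-only comparison makes it look better. The only thing that changed is which miles were compared.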

It’s also important to consider reporting thresholds. Some datasets capture every minor fender-bender, while others only include crashes above certain damage thresholds or those involving injuries. Low-speed rear-end collisions (where another driver hits the automated vehicle from behind) appear frequently in some AV datasets and may reflect the AV driving more cautiously than surrounding traffic rather than the AV causing problems.

What NHTSA’s Crash Reports Actually Show

The National Highway Traffic Safety Administration’s Standing General Order requires manufacturers and operators to report qualifying crashes involving automated driving systems and certain Level 2 driver-assist features. This database gets cited constantly in news coverage.

NHTSA itself acknowledges significant limitations in this data:

- Reports may contain duplicates

- Crashes sometimes get miscategorized between Level 2 and true ADS

- Different manufacturers have different reporting practices

The biggest limitation for answering “do self-driving cars crash more?” is that the Standing General Order (SGO) data typically lacks consistent exposure information across all systems. You can see how many crashes were reported, but you cannot easily determine how many miles each system drove. Without that denominator, you cannot calculate meaningful crash rates.

When you see a headline claiming automated vehicles had a certain number of crashes, ask three questions:

- Was the system Level 2 (human supervising) or true ADS (driverless)?

- What’s the denominator (miles, vehicles, trips)?

- What crash types dominate the data, and does that reflect your driving environment?

What Large-Scale Robotaxi Studies Suggest

The most detailed public data on true driverless operation comes from Waymo. A Swiss Re study examining 25.3 million fully autonomous miles through July 2024 used insurance claims frequency as its primary metric. This approach measures outcomes where someone filed a claim, which correlates more closely with fault than raw crash counts.

The study attempted to control for geography by comparing Waymo’s performance to human drivers in the same ZIP codes where Waymo operates. This matters because crash rates vary dramatically by location. For example, comparing robotaxi crash data from San Francisco to average national driving statistics would be misleading.

A separate academic analysis of 7.1 million rider-only miles examined how different methodological choices affect conclusions. Depending on which benchmarks you use and how you handle various crash types, the same underlying data can support either a “safer” or a “less safe” conclusion.

These studies provide evidence about one specific driverless fleet operating in defined conditions. They do not automatically apply to all automated vehicles, to consumer cars with driver-assist features, or to how these systems might perform in conditions they weren’t designed for.

Level 2 systems show different crash patterns because humans still make driving decisions and may over-trust the technology. A driver who believes their car is “self-driving” when it actually requires constant supervision creates risks that don’t exist with true driverless operation or traditional human driving.

Texas Liability When Automation Is Involved

Statistical safety debates are less important to crash victims than practical questions about compensation. Even if a system is safer on average, individual crashes still cause real injuries. Texas law provides several potential paths to recovery.

Negligence claims can apply when a human had a duty to supervise (Level 2 systems) or when a company’s practices fell below reasonable standards. If a driver was scrolling their phone while their car’s driver-assist was engaged, that driver may bear responsibility.

If a robotaxi operator knowingly failed to change out a faulty sensor, and that sensor then directly caused a crash, the operator may be liable.

Product liability claims can apply when a design defect, manufacturing defect, or inadequate warnings contributed to the crash. These claims typically target manufacturers rather than individual drivers.

Multiple defendants are common in automation-related crashes. A single collision might involve the human driver, the vehicle manufacturer, the software developer, the fleet operator, a maintenance vendor, and the driver of another vehicle. Texas uses comparative responsibility, meaning fault gets allocated among all responsible parties.

For crashes involving driver-assist systems, insurers will ask whether the system was engaged, what it prompted the driver to do, and whether the driver’s hands were on the wheel. For robotaxi crashes, they’ll want to know which app was used, who operated the vehicle, and what records exist from the trip.

What Evidence Is Needed in Automation-Related Claims?

AV and ADAS cases often become evidence fights. Critical data may be controlled by companies with retention policies that delete information after days or weeks. Acting quickly is key.

Standard crash evidence applies: photos of the scene, witness contact information, police reports, and medical records documenting your injuries. Automation-related crashes require additional documentation:

- Screenshots of any ridehail or robotaxi app showing your trip

- Any indication of whether automated features were engaged

- Dashcam footage from your vehicle or nearby vehicles

- Identification of any cameras at the intersection or on nearby buildings

A preservation letter sent to the vehicle manufacturer, fleet operator, or technology company can prevent deletion of system logs, video footage, and remote assistance records. Waiting weeks to contact an attorney can mean this evidence no longer exists.

Insurance adjusters will ask pointed questions designed to shift responsibility. Before giving a recorded statement about what the “autopilot” was doing or whether you were paying attention, understand that your answers may affect your claim significantly.

How We Can Help

At Angel Reyes & Associates, we’ve spent over 30 years helping Texas families navigate complex injury claims. We understand that crashes involving automated vehicles raise questions traditional car accident cases don’t, such as:

- Who programmed the system?

- Who maintained it?

- Who should have been watching the road?

Our team has offices across Texas and can handle most of your case remotely. We offer free consultations and work on contingency, meaning you pay no fee unless we recover compensation for you.

If you’ve been injured in a crash involving any level of vehicle automation anywhere in Texas, contact us today to discuss your situation. The evidence that you need to win your case may not exist for long.

Self-Driving vs. Human Driving Safety FAQs

Can a self-driving car be used legally in Texas without someone sitting behind the wheel?

Texas law generally allows an automated motor vehicle to operate without a human driver present if the vehicle meets the statute’s requirements, including the ability to comply with traffic laws and coverage that meets minimum financial responsibility requirements.

Will my own insurance cover me if I was hurt while riding in a robotaxi?

It may, depending on your policy and the type of coverage you carry, such as MedPay, PIP, or uninsured/underinsured motorist coverage. In some cases, more than one policy may be relevant.

Can a black box or vehicle data recorder show whether automation was turned on?

Sometimes, yes, but the available data varies by vehicle and system. In automation-related crashes, useful records may also include app trip logs, onboard video, telematics, and company-maintained system logs.

Does a recall automatically mean the manufacturer is responsible for the crash?

No. A recall can be important evidence, but you still have to show the recalled problem actually contributed to the collision or your injuries.

What if the self-driving system was being updated or had recently changed software before the crash?

Software version history can become a key piece of evidence because updates may affect how the system detects hazards, brakes, or responds to road conditions. That does not automatically prove fault, but it can become an important part of the investigation.